The Vision: Beyond "Green" Builds

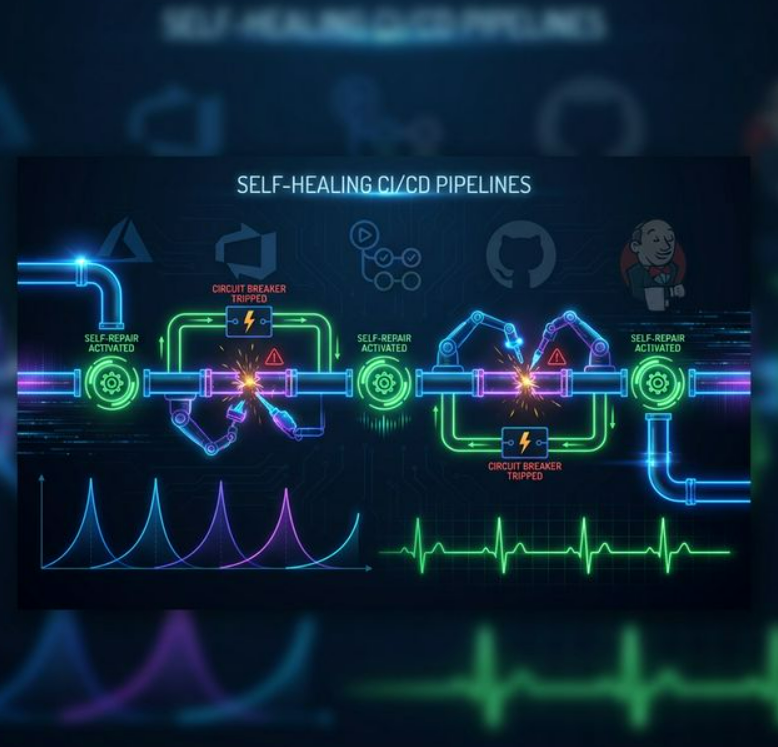

Standard CI/CD pipelines are fragile. A transient network glitch, a full disk, or a minor configuration drift can stall your entire development team. A Self-Healing Pipeline integrates observability and automated remediation directly into the orchestration layer.

The result: pipelines that detect failures, analyse root causes, apply targeted fixes, and retry — all without waking up an engineer at 3 AM.

The Architecture of Resilience

To make this work across Azure DevOps, GitHub Actions, or Jenkins, you need three tiers of logic:

- Detection: Identifying the failure type (Infrastructure vs. Code).

- Analysis: Using logic (or LLMs) to parse logs.

- Remediation: Executing a targeted fix script and retrying.
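The detection tier can be sketched as a small log classifier. This is a minimal illustration rather than a complete rule set: the regular expressions and the `classify_failure` name are assumptions chosen for the example, and real pipelines would need patterns tuned to their own logs.

```python
import re

# Hypothetical patterns; tune these against your own pipeline logs.
INFRA_PATTERNS = [
    r"ETIMEDOUT|ECONNRESET|EAI_AGAIN",        # network errors commonly seen in npm output
    r"No space left on device",               # full disk
    r"Temporary failure in name resolution",  # DNS blip
    r"ResourceNotFound",                      # missing cloud resource
]
CODE_PATTERNS = [
    r"SyntaxError|TypeError",                 # language-level errors
    r"error TS\d+",                           # TypeScript compiler errors
    r"\d+ (tests? )?failed",                  # test failures
]


def classify_failure(log_text: str) -> str:
    """Return 'infrastructure', 'code', or 'unknown' for a failure log."""
    if any(re.search(p, log_text) for p in INFRA_PATTERNS):
        return "infrastructure"
    if any(re.search(p, log_text) for p in CODE_PATTERNS):
        return "code"
    return "unknown"
```

Only failures classified as infrastructure are good candidates for automated retries or fixes; code failures and unknown failures are usually better routed to a human.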

1. Transient Error Retries — The "Quick Fix"

Many pipeline failures are "flaky" — a timeout fetching an npm package, a momentary DNS blip, or a slow registry response. Instead of a hard fail, implement exponential backoff retries.
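For platforms without a built-in backoff option, exponential backoff can be sketched in a few lines of Python. The function names, the 2-second base, and the 60-second cap are assumptions made for this illustration, and the random "full jitter" is one common variant rather than the only way to do it.

```python
import random
import time


def backoff_delays(max_attempts, base_seconds=2.0, cap_seconds=60.0):
    """Upper bounds for the wait before each retry: base * 2**i, capped."""
    return [min(cap_seconds, base_seconds * 2 ** i) for i in range(max_attempts - 1)]


def retry_with_backoff(action, max_attempts=3, base_seconds=2.0,
                       cap_seconds=60.0, sleep=time.sleep):
    """Call action() until it returns True or attempts run out.

    Returns True on success, False once every attempt has failed.
    """
    delays = backoff_delays(max_attempts, base_seconds, cap_seconds)
    for attempt in range(max_attempts):
        if action():
            return True
        if attempt < len(delays):
            # Full jitter: wait a random time up to the exponential ceiling
            # so parallel jobs do not all retry at the same moment.
            sleep(random.uniform(0, delays[attempt]))
    return False
```

A pipeline script could wrap a flaky command with something like `retry_with_backoff(lambda: subprocess.run(["npm", "ci"]).returncode == 0)`, after importing `subprocess`.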

In GitHub Actions, combine continue-on-error with a retry loop script, or use a dedicated action such as nick-fields/retry. Note that the example below waits a fixed 30 seconds between attempts rather than backing off exponentially.

GitHub Actions: Retry with nick-fields/retry

```yaml
jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - name: Install dependencies (with retry)
        uses: nick-fields/retry@v2
        with:
          timeout_minutes: 10
          max_attempts: 3
          retry_wait_seconds: 30
          command: npm ci
```

Azure DevOps: retryCountOnTaskFailure

```yaml
steps:
  - task: Npm@1
    inputs:
      command: 'ci'
    # Task-level property, a sibling of "inputs" rather than an input itself
    retryCountOnTaskFailure: 3
```

Jenkins: retry() Block

```groovy
stage('Install') {
    steps {
        // Re-run the enclosed step up to 3 times before failing the stage
        retry(3) {
            sh 'npm ci'
        }
    }
}
```

2. Infrastructure Auto-Provisioning

If a deployment fails because a resource (an S3 bucket, Azure Resource Group, or Kubernetes namespace) is missing or misconfigured, the pipeline should automatically trigger an IaC "reconcile" run before retrying.

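Example trigger: the deployment returns Error 404: ResourceNotFound, so the pipeline runs terraform apply (or a Bicep deployment, for example via az deployment group create) and then retries the app deployment.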

Example: Terraform Auto-Reconcile on Failure

```yaml
- name: Deploy Application
  id: deploy
  run: ./deploy.sh
  # Let the job continue so the next step can inspect the outcome
  continue-on-error: true

- name: Auto-Provision Infrastructure on Failure
  if: steps.deploy.outcome == 'failure'
  run: |
    echo "Deploy failed; reconciling infrastructure..."
    # -chdir leaves the working directory unchanged, so ./deploy.sh still resolves
    terraform -chdir=infra init
    terraform -chdir=infra apply -auto-approve
    echo "Re-running deployment..."
    ./deploy.sh
```

3. Log Parsing & AI Remediation

When a build fails, the pipeline sends the last 50 lines of the error log to an LLM API. The AI identifies the root cause and the pipeline acts on it — all automatically.

The Self-Healing Workflow

- Pipeline step fails → capture last 50 lines of stderr.

- Send logs to LLM (OpenAI / local Llama).

- LLM identifies: missing environment variable.

- Pipeline checks Key Vault / Secrets Manager.

- If found → inject variable → restart the failed job.
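The workflow above can be sketched as a function that turns the model's diagnosis into a bounded pipeline action. Everything here is an assumption made for illustration: the `plan_remediation` name, the JSON reply shape (`cause` and `variable` keys), and the plain dictionary standing in for Key Vault or Secrets Manager. The point of the design is that the pipeline chooses from a fixed set of actions instead of running whatever the model suggests.

```python
import json


def plan_remediation(llm_reply: str, secret_store: dict) -> dict:
    """Map the model's JSON diagnosis to a pipeline action.

    Assumes a hypothetical reply such as
    {"cause": "missing_env_var", "variable": "API_TOKEN"}.
    secret_store is a stand-in for a real secrets backend.
    """
    try:
        diagnosis = json.loads(llm_reply)
    except json.JSONDecodeError:
        return {"action": "alert", "reason": "unparseable diagnosis"}
    if not isinstance(diagnosis, dict):
        return {"action": "alert", "reason": "unexpected diagnosis shape"}

    # Only one cause is handled automatically in this sketch.
    if diagnosis.get("cause") != "missing_env_var":
        return {"action": "alert", "reason": "no automated fix for this cause"}

    name = diagnosis.get("variable")
    if not name or name not in secret_store:
        return {"action": "alert", "reason": f"{name} not found in secret store"}

    # Found: hand the variable back so the caller can inject it and restart the job.
    return {"action": "inject_and_retry", "env": {name: secret_store[name]}}
```

Any reply that does not match a known cause falls through to an alert, which keeps the automated path narrow.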

Python: AI Log Analyser Script

```python
import json
import os
import subprocess
import sys

from openai import OpenAI  # requires openai>=1.0; older releases used openai.ChatCompletion

client = OpenAI(api_key=os.getenv("OPENAI_API_KEY"))


def get_last_n_lines(log_file, n=50):
    """Return the last n lines of the log file as one string."""
    with open(log_file, "r") as f:
        return "".join(f.readlines()[-n:])


def analyse_and_heal(log_file):
    """Ask the model for a diagnosis and apply its fix if it claims one is safe."""
    logs = get_last_n_lines(log_file)
    resp = client.chat.completions.create(
        model="gpt-4",
        messages=[
            {"role": "system", "content": (
                "You are a DevOps assistant. Analyse this CI/CD failure log. "
                "Respond ONLY with JSON: "
                "{\"cause\": \"...\", \"fix\": \"shell command to fix\", \"can_auto_fix\": true/false}"
            )},
            {"role": "user", "content": f"Log:\n{logs}"},
        ],
        temperature=0.2,
    )
    result = json.loads(resp.choices[0].message.content)
    print(f"Cause: {result['cause']}")
    if result["can_auto_fix"]:
        print(f"Applying fix: {result['fix']}")
        # Caution: this runs model-generated shell. Restrict it (for example,
        # to an allowlist of known commands) before using it on real runners.
        subprocess.run(result["fix"], shell=True, check=True)
        print("Fix applied. Retrying pipeline step...")
        return True
    else:
        print("Cannot auto-fix. Alerting team.")
        return False


if __name__ == "__main__":
    healed = analyse_and_heal(sys.argv[1])
    sys.exit(0 if healed else 1)
```

Implementation Across Platforms

| Feature | Azure DevOps | GitHub Actions | Jenkins |
|---|---|---|---|
| Retries | retryCountOnTaskFailure | nick-fields/retry action | retry(n) { ... } block |
| Error Handling | condition: failed() jobs | if: failure() steps | post { failure { ... } } |
| State Management | Pipeline Variables | Environment Secrets | Global Config |

The Feedback Loop: Monitoring the "Healer"

You cannot "set and forget" a self-healing system. You need to actively track its performance:

- MTTR: Does the self-healing actually speed up Mean Time To Recovery?

- False Positives: Is the pipeline "fixing" things that aren't actually broken?

- Cost: Infinite retry loops can rack up massive cloud bills. Always set limits.

- Circuit Breaker: Hard-stop after 2 failed heal attempts. Alert a human. Never loop forever.
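The circuit breaker rule can be sketched as a bounded loop. The `run_with_healing` name and its callable parameters (`step`, `heal`, `alert`) are hypothetical placeholders for whatever your pipeline uses to run a step, attempt a fix, and notify a person.

```python
def run_with_healing(step, heal, max_failed_heals=2, alert=print):
    """Run step(); on failure, heal and retry at most max_failed_heals times.

    step() returns True on success. heal() attempts a fix. alert(msg) notifies
    a human. The loop is bounded, so it can never retry forever.
    """
    if step():
        return True
    for _ in range(max_failed_heals):
        heal()
        if step():
            return True
    # Circuit breaker trips: stop healing and hand over to a person.
    alert(f"Self-healing stopped after {max_failed_heals} failed heal attempts")
    return False
```

With the default of 2, the step runs at most three times in total: once initially and once after each heal attempt. That also gives the cost of a failing job a fixed upper bound.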

Conclusion

Self-healing pipelines are the natural evolution of DevOps maturity. By layering transient retries, IaC auto-provisioning, and AI-powered log analysis — backed by a circuit breaker and solid observability — you build a system that's not just fast, but fundamentally resilient.

The goal isn't to eliminate human engineers. It's to protect their sleep, their focus, and their sanity — reserving human judgment for problems that truly need it.